Great stories aren’t owned – they are shared. Brand owners ignore this at their peril.

One of the defining qualities of a truly powerful story is that others want to retell it. In the act of retelling, stories inevitably change. They are adapted, embellished, and localised. This is one of the strengths of storytelling.

We see this in myths and legends. Stories travel across cultures, changing as they go. For instance, many societies have flood myths with strikingly similar elements – angry gods, chosen survivors, and the release of a bird to test whether the waters have receded (often referred to as the ‘bird scout’ motif). The core idea persists, but each culture makes it its own.

I often see this at conferences, where a story told on stage at one event is retold at a later one, usually with different details. One example is the story of the angry father who learned of his daughter’s pregnancy from the product recommendations she received – a story that most likely began as a hypothetical before being retold as a real event.

A story becomes more powerful precisely because it is no longer fixed – it is alive.

Fiction creators understand this. The popularity of a story is often measured by the fan fiction it inspires. Countless Sherlock Holmes stories have been written by people other than Conan Doyle, including less obvious ones such as the TV series House (the clue is in the name – geddit?).

Entire fictional universes, from science fiction franchises to fantasy sagas, have expanded far beyond their original authors because fans wanted to continue the story. Were it not for E. L. James’ love of the Twilight novels, we would not have Fifty Shades of Grey (who do you think Mr Grey was based on …?). The worlds of science fiction are especially ripe for this phenomenon, as the thousands of stories fans have written in the universes of Star Wars, Star Trek, and Doctor Who show.

Brands are no different.

Two decades ago, the rise of user-generated content clearly showed that brands were no longer controlled solely by marketers. Today, thousands of creators and influencers tell brand stories every day through videos, reviews, memes, and social media posts.

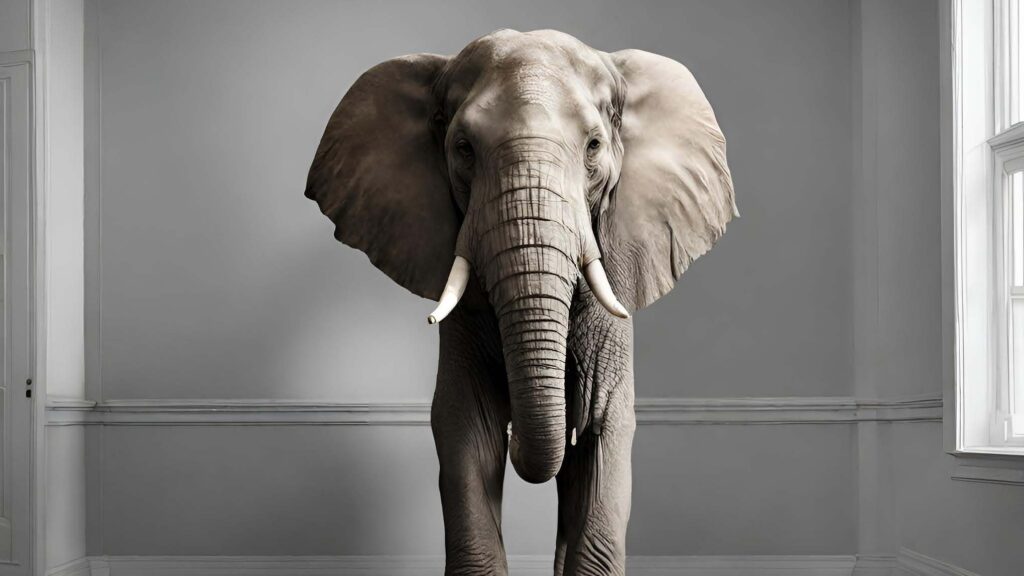

This creates a paradox for brand owners: they are not really in control, yet they are still responsible.

In reality, a brand exists only because people believe in it. A brand with no fans is just a name. A brand with millions of fans is a cultural force shaped as much by its community as by its originators and legal owners.

For anyone building a brand, the fact that others want to tell your story for you is not something to control. It is something to nurture, celebrate, and learn from. Third-party stories are in some ways a truer reflection of the brand than anything its owners might see for themselves: they show the brand as others see it.

Understanding how the brand story changes through retelling shows clearly which parts of the story resonate most strongly, and points to areas for further development.

Letting go of control is hard for marketers. It requires courage, and careful stewardship. While brands cannot own their stories, they can influence them by clearly articulating their values. These values become the brand’s “canon” – the principles that guide how the story should evolve.

When brands betray that canon, fans notice and react fiercely (search for the term ‘Holdo Manoeuvre’ if you’re keen to go down a rabbit hole on how fandoms respond to such transgressions).

Fans are loyal, and brands crave loyalty. But loyalty is not a transaction – it is a relationship. Take it for granted, and you will discover that a betrayed fan can become your most relentless critic.

Yet when brands stop trying to control the story and instead choose to listen, learn, and participate in it, something extraordinary happens. The stories told by customers, creators, and communities often illuminate the true meaning of a brand far more clearly than any boardroom strategy ever could.

These third-party stories reveal what people actually care about, what they cherish, and what they are prepared to carry forward. They are not noise – they are the brand in its most authentic form.

So the task of brand building is not to lock the story down, but to set it free with intention.

If people want to tell your story for you, that is not a risk to manage – it is the highest form of success.

Because great brands don’t just make customers. They create fans.

And it is fans – not marketers – who turn brands into legends.